
A structured approach for progressive political ambitions – Part 5

This is Part 5 of the short series of briefing notes that arose out of discussions I recently had in London about how a progressive political party might want to break out of the shackles that the British Labour Party has bound itself in with its obsession with fiscal rules and an adherence to the fiscal fictions of mainstream macroeconomics. The thoughts, in my view, are relevant for all aspiring progressive political parties that might have fallen prey to the fictional world of mainstream economics and cannot find a way back. In the first part, I suggested a way forward was to shift the focus of what can be done with fiscal policy away from financial matters towards an emphasis on real resource constraints – that is, what productive resources are available for public use. In this sense, the discussion becomes focused on how much nominal spending growth is possible without sparking inflationary pressures as a result of nominal spending growth outstripping the productive capacity of the economy. In Part 2, I focused on aspects of the institutional structure that should be considered to support that shift in focus, including a planning network and a return to a public employment service. In Part 3, I began an examination of the long debate about economic planning. In Part 4, I continued that discussion. In Part 5, I am discussing how the age of rapid, networked communication systems eliminates the basis of the arguments made by pro-market, anti-planning critics. Today’s discussion is a practical description of how cybernetics can help deal with resource constraints in a planning system.

Earlier posts in the series:

1. A structured approach for progressive political ambitions – Part 1 (March 2, 2026).

2. A structured approach for progressive political ambitions – Part 2 (March 9, 2026).

3. A structured approach for progressive political ambitions – Part 3 (March 16, 2026).

4. A structured approach for progressive political ambitions – Part 4 (March 23, 2026).

Project Cybersyn

The British academic – Stafford Beer – was a researcher at Manchester University in the areas of – Operational research – and – Management cybernetics.

He was an early contributor in the 1950s to the first computer system – Ferranti Pegasus – applying management cybernetics (while at United Steel in Britain).

The Ferranti computers were manufactured in Manchester.

Its precursor was the Ferranti Mark 1, which was installed at the University of Manchester in 1951.

You can read about it in this article – How a 70-year-old ‘Baby’ changed the face of modern computing (published June 21, 2018).

It includes pictures.

It was the reason that the famous codebreaker – Alan Turing – went to work at the University and “where he published some of his most influential work on Artificial Intelligence.”

As an aside, one of the surviving Pegasus machines was on display at the – Science and Industry Museum, Manchester – until it was shifted to another archive.

But a replica of the ‘Baby’ is on display at the Science and Industry Museum, see – Meet Baby.

When I was a PhD student at the University of Manchester in the 1980s I used to visit either the Kilburn Building on Oxford Street or the Coupland Building (behind the student union on Oxford Street), I can’t quite remember which, and see the old computers on display.

The huge vacuum tubes that powered the systems were magnificent as were the roof-high towers of components.

The computing power on my iPhone exceeds these systems but they were still magnificent.

Anyway, Stafford Beer.

To continue the aside, Stafford Beer lectured in management cybernetics at the University of Manchester and I attended a guest lecture there that he gave in the early 1980s on his experience with the so-called – Project Cybersyn – which was really interesting.

In 1971, Stafford Beer was invited by – Fernando Flores – who later became Salvador Allende’s Finance Minister, but in his earlier role was in charge of Project Cybersyn, to develop a “distributed decision-support system to aid in the management of the national economy”.

This was to help the nation move down “the Chilean road to socialism”, which was built on the idea that workers would participate directly and in real-time in the planning process.

Stafford Beer was called in to create the information system that would allow the planning process to be efficient (reduce wastage) and give voice to those in the factories.

The system would allow the shop-floor knowledge held by workers (the ‘informal knowledge’) to be fed into the central planning process quickly which would lessen the chance of major mismatches between demand and supply occurring.

As I noted in the earlier parts of this series, a major criticism against economic planning (particularly from the likes of Ludwig von Mises and Friedrich Hayek) was that Market Socialism could not deliver efficient economic outcomes because the essential signals from the price system would not be available to bring supply and demand together across the array of goods and services.

The critics argued that central planning would fail because it could not know what consumers wanted and could not match lowest-price production to the demand.

Stafford Beer believed that applying the principles of management cybernetics would overcome the informational deficiencies that made a central planning system difficult to implement effectively.

There was a fascinating chapter written by Hermann Schwember in 1977 – Cybernetics in Government: Experience with New Tools for Management in Chile 1971–1973 – which was included in the volume – Concepts and Tools of Computer-assisted Policy Analysis (edited by Hartmut Bossel) and published by Springer Basel AG.

Hermann Schwember was one of the key Project Cybersyn designers.

The Hermann Schwember chapter is on pages 79-138 and is essential reading if you can find it.

He was formerly a Professor of Fluid Mechanics at the Catholic University of Chile and went into exile in London after the Coup.

As an aside (another one), this 1981 article by Hermann Schwember and Jerome Bear – Free Market, unfree thought: General Pinochet’s prescription for Chile’s universities – is a major attack on the economics of the Pinochet dictatorship and the repression of the Chilean university system.

Also interesting reading.

The problem that Project Cybersyn sought to address was summarised by Hermann Schwember in this way (p. 81):

Given a complex system called nationalized industry, subject to very fast changes (size, product design, price policies, etc.), inserted in a broader system (the national economy, inserted in turn in the whole of the national socio-political life), and subject to very specific political boundary conditions, it is required to develop its structure and information flow in order that decision-making, planning and actual operations respond satisfactorily to a program of external demands and the system remain viably.

His chapter provides the theoretical basis for the design of the computer system and network.

The design of Project Cybersyn was intended to provide “a balance between autonomy and dependence” mediated through complex feedback loops.

If you are interested in the deep thinking involved then please read his chapter which I have made available on my server.

I found his discussion about how each factory was analysed and represented in a flow-chart describing all the information flows to be really fascinating and helpful.

Project Cybersyn:

… consisted of four modules: an economic simulator, custom software to check factory performance, an operations room, and a national network of telex machines that linked to a single mainframe computer

The state-run enterprises would transmit via telex real-time information back to the central planning office.

The central computing software would “monitor production indicators, such as raw-material supplies or high rates of worker absenteeism”.

Information was to be fed into the economic simulator to allow the government to “forecast the possible outcome of economic decisions”.

The principles of management cybernetics were applied to the design, which was intended to devolve “decision-making power within industrial enterprises to their workforce to develop self-regulation of factories.”
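A minimal sketch in Python may help make the monitoring idea concrete. It screens the latest reading of each indicator against its recent history and reports only the unusual ones, so that routine data stays at the plant. The indicator names, the numbers and the simple "deviation from the recent average" rule are all assumptions made for illustration. This is not the statistical method that the Cybersyn software actually used.

```python
from statistics import mean, pstdev


def flag_unusual(history, latest, threshold=2.0):
    """Return True if `latest` lies more than `threshold` standard
    deviations away from the average of `history` (hypothetical rule)."""
    avg = mean(history)
    spread = pstdev(history)
    if spread == 0:
        # No variation in the history: any change at all is unusual.
        return latest != avg
    return abs(latest - avg) / spread > threshold


# Hypothetical daily readings: (recent history, latest value).
readings = {
    "tin_can_stock_days": ([12, 11, 12, 13, 12], 5),
    "absenteeism_pct": ([4.0, 4.5, 3.8, 4.2, 4.1], 4.3),
}

# Only indicators that break their recent pattern are passed upwards.
alerts = [
    name
    for name, (history, latest) in readings.items()
    if flag_unusual(history, latest)
]
print(alerts)
```

In this made-up example the sudden fall in tin can stocks is flagged, while the small movement in absenteeism is treated as normal variation and is not reported.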

In terms of the emphasis on real resource constraints, Hermann Schwember’s discussion is really applicable.

On Page 111, he talks about a ‘canning plant’ and how the team went about designing the information system for it as a prototype.

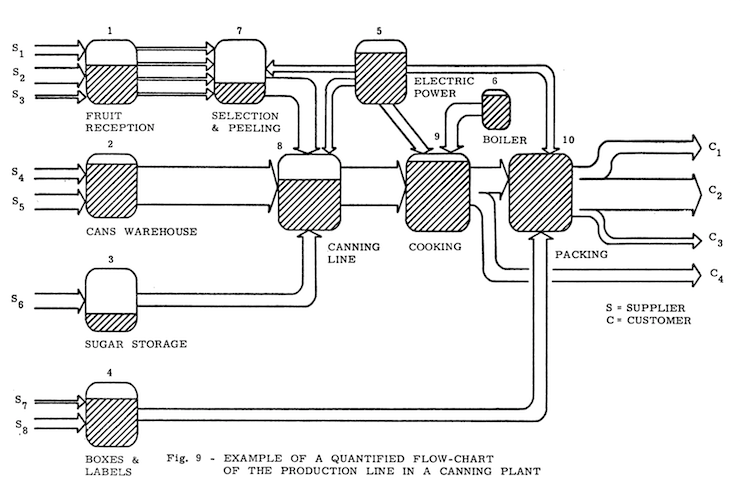

This flow-chart appears on Page 112 of his chapter and describes the production line of a fruit and vegetable canning factory, that they were integrating into Cybersyn.

This “level of information” was considered “adequate” for the operation manager to understand in real-time the processes necessary to keep the factory humming along efficiently.

That is, it could help the worker management “understand the basic inputs, processes and outputs, to detect bottle-necks … and to provide a starting point for tactical planning (for instance, increase of can warehousing, cooking and packing facilities, and boiler capacity).”

The “arrow widths are intended to show the relative importance (on a merely qualitative basis) of the respective processes” and “the levels inside each box are indicative of the ACTUALITY of the respective unit.”

Actuality refers to the planned output levels for the coming 12 months.
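The bottleneck logic that such a flow-chart supports can be sketched in a few lines of Python. In a production line where every unit of output passes through each stage, the stage with the lowest capacity sets the limit for the whole plant. The stage names and daily capacities below are hypothetical and are not taken from Hermann Schwember's chapter.

```python
def find_bottleneck(capacities):
    """Return (stage, capacity) for the stage with the lowest capacity.

    `capacities` maps each stage to its maximum daily throughput,
    all expressed in the same unit (here, cans per day).
    """
    stage = min(capacities, key=capacities.get)
    return stage, capacities[stage]


def utilisation(capacities, throughput):
    """Share of each stage's capacity used at a given plant throughput."""
    return {stage: throughput / cap for stage, cap in capacities.items()}


# Hypothetical canning line, in cans per day.
line = {
    "selection": 12000,
    "peeling": 10000,
    "cooking": 8000,
    "canning": 9500,
    "sterilizing": 9000,
}

stage, limit = find_bottleneck(line)
usage = utilisation(line, limit)
print(stage, limit)
```

With these made-up figures the cooking unit limits the plant to 8,000 cans per day, and every other stage is running below its capacity, which is the kind of starting point for tactical planning that the quote describes.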

The information generated at the plant level was to be much more comprehensive than the information passed up to the national level, so that the national planners would not drown in data.

The flow-chart led to the development of key factory-level indicators that bear on the issue of real resource constraints:

1. Which supplies are critical? Is there one that will determine the behavior of the whole system, for instance tin cans or sugar? Are their levels of stock reflected immediately on production or are there significant time-lags? Should we measure incoming values or levels at the warehouse?

2. Among the processes, is there a critical point relevant for the whole plant behavior? For instance, daily production, or number of batches going through the sterilizing retorts? If there are several relevant points, could one obtain a balanced picture with just very few points? For instance, kilograms of peeled fruit, number of cans to the canning line, vapor consumption? Or is this information merely redundant?

3. Are there indirect measures of efficiency? For instance, amount of fruit rejected in the selection stage or amount of garbage thrown from the peeling section?

4. Is there any machine that will by itself provide the indicator, the counter of the sealing unit or the integrating clock of the boiler?

5. What about the final product? Is there any need to keep overall balances of different products by kind, size, and quality, or is there one or just a few that will provide essentially the same information? Do we need rather to keep track of the deliveries to a particular customer, due to his size or to the importance of his purchase for other relevant production units?

And more.

He pointed out that the canning factory was a much more straightforward application than the more complicated manufacturing processes involving, for example, copper and brass wires.

But the canning factory was used as the prototype for working out how many indicators would be sufficient to ensure efficient production within available material resource constraints.

His discussion then centred on what would happen if the plan required:

… a 20% expansion of the cooking unit (in order to satisfy a certain goal of social needs allocated to this company)

Once again the system was designed to deal with real resource constraints:

1. What new investment would be necessary (in boilers)?

2. What technological change could be exploited to improve productivity with existing capital?

Project Cybersyn was designed to map the desires of the policy makers (the politicians) into the realities of the available resources.

Could such an expansion be currently accommodated?

If not, what time frame would be possible?

And this single factory question would be mapped into all the plans of all the factories and fed into a reconciliation process at the national level.
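The two steps can be illustrated with a short Python sketch: first a plant-level check of whether a planned expansion fits within existing capacity, and then a national-level reconciliation of competing requests for a scarce resource. The function names and figures are hypothetical, and the pro rata sharing rule is only an assumption chosen for simplicity. The source does not describe how the national reconciliation was actually carried out, and a real planning process would presumably weigh social priorities rather than scale every request equally.

```python
def expansion_gap(current_output, growth_pct, boiler_capacity):
    """Extra boiler capacity needed to lift output by `growth_pct` percent.

    Returns 0.0 if the existing boilers can already support the target.
    """
    required = current_output * (100 + growth_pct) / 100
    return max(0.0, required - boiler_capacity)


def reconcile(requests, available):
    """Share a scarce resource among plants (hypothetical pro rata rule).

    If total requests fit within `available`, every plant gets what it
    asked for; otherwise all requests are scaled down proportionally.
    """
    total = sum(requests.values())
    if total <= available:
        return dict(requests)
    return {plant: amount * available / total
            for plant, amount in requests.items()}


# Plant level: a 20% expansion of a cooking unit producing 8,000 units
# with boilers that can support 9,000 units (made-up figures).
gap = expansion_gap(current_output=8000, growth_pct=20, boiler_capacity=9000)

# National level: two plants ask for new boiler capacity, but only
# 1,000 units can be supplied this year (made-up figures).
allocation = reconcile({"canning_plant": gap, "wire_plant": 900}, available=1000)
print(gap, allocation)
```

In this example the canning plant needs 600 units of extra boiler capacity to meet the 20% target, but the national total of requests exceeds what can be supplied, so the plan would either have to be scaled back or spread over a longer time frame.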

When the CIA-Coup unfolded on September 11, 1973, Project Cybersyn was terminated.

The Chicago Boys took over with Milton Friedman and wrecked the country.

While the photos of the Cybersyn control room look somewhat bizarre now (53 years later) the reality is that the work that Stafford Beer was doing way back then is exactly what modern corporations are doing every day albeit with more advanced technology.

The cybernetic principles to collect and assess complex information in real-time are the same.

This New Yorker article by Evgeny Morozov (October 6, 2014) – The Planning Machine – is a contemporary discussion of the Project.

Stafford Beer knew that “the planning problems of business managers—how much inventory to hold, what production targets to adopt, how to redeploy idle equipment—were similar to those of central planners.”

His system was designed to find and eliminate supply chain bottlenecks – which trucks were where, etc.

Interestingly, in 1960, Stafford Beer spoke at a conference where he outlined his approach to dealing with information feedback loops and system control, and none other than Friedrich Hayek “complimented him on his vision for the cybernetic factory”.

It is ironic (tragic) that after the 1973 Coup, Hayek became an advisor to General Pinochet.

The famous Chilean folksinger – Ángel Parra – wrote a song dedicated to Project Cybersyn in 1972 entitled Litany for a Computer and a Baby About to Be Born, although feminists have pointed out since that the allusion to a pregnancy was fraught given that women were not really involved in Chilean decision-making at the time.

Anyway, a brief musical segment:

Conclusion

I thought Hermann Schwember’s reflection on the use of computers in this context was worth repeating:

There might be people opposing these suggestions on the mistaken grounds that it would be a new application of computers to manipulate people. This would be once more the wrong presentation of the issue. Computers can be always, and are very often, used to manipulate people. This does not depend so much on the social context, political values and participation mechanisms. The realities of the Chilean experience faced many times the issue of efficiency, allocation of resources, critical priorities, qualification of the labor force and so on. This will necessarily happen in any complex system. The difference between the many possible social models is one of the normative values and not of technicalities. Therefore, the issue will be settled at the level of rational and feasible political options and not at one of technicalities. Much less will it be helped by any form of iconoclasm, either from the extreme right or the extreme left.

In the next episode we will consider the case study of the Ministry of International Trade and Industry (MITI) in Japan, which provides a good blueprint for how a political party taking office might implement a planning process.

That is enough for today!

(c) Copyright 2026 William Mitchell. All Rights Reserved.

This series is great. What to do the day after the revolution. Many activists are short on answers in terms of means while quite good on ends. This kind of discussion is very helpful. So much of my 80s and 90s childhood was full of narrative of how the Soviet bloc fell not really because of political repression but because people just wanted western jeans, pop music and fast food. Even if fundamental needs were met it was the satisfaction of frivolous wants that won the battle. Aside from development of the human soul to not want frivolous consumption, how can a collectivist society respond to consumer preferences better? I doubt anyone is immune from enjoying consumption. Hence the Che Guevara t-shirt.

Bill have you seen this?

Americans (Still) Support a Federal Jobs Guarantee

https://jacobin.com/2026/04/federal-jobs-guarantee-supermajority-support